AI ethics guide — start here

What BrokenCtrl is, how we work, and how to get the most out of it.

AI governance accountability — documented

BrokenCtrl is a case-based AI ethics guide covering the gap between what AI companies say about ethics and governance and what they actually do. Every claim is sourced. Every case is rated. Nothing is published on the word of a press release.

The site covers corporate conduct, AI governance regulations, documented harms, militarisation of AI, and economic displacement. The editorial position is practitioner-oriented — built for people who work in risk, compliance, policy, or journalism and need analysis they can act on.

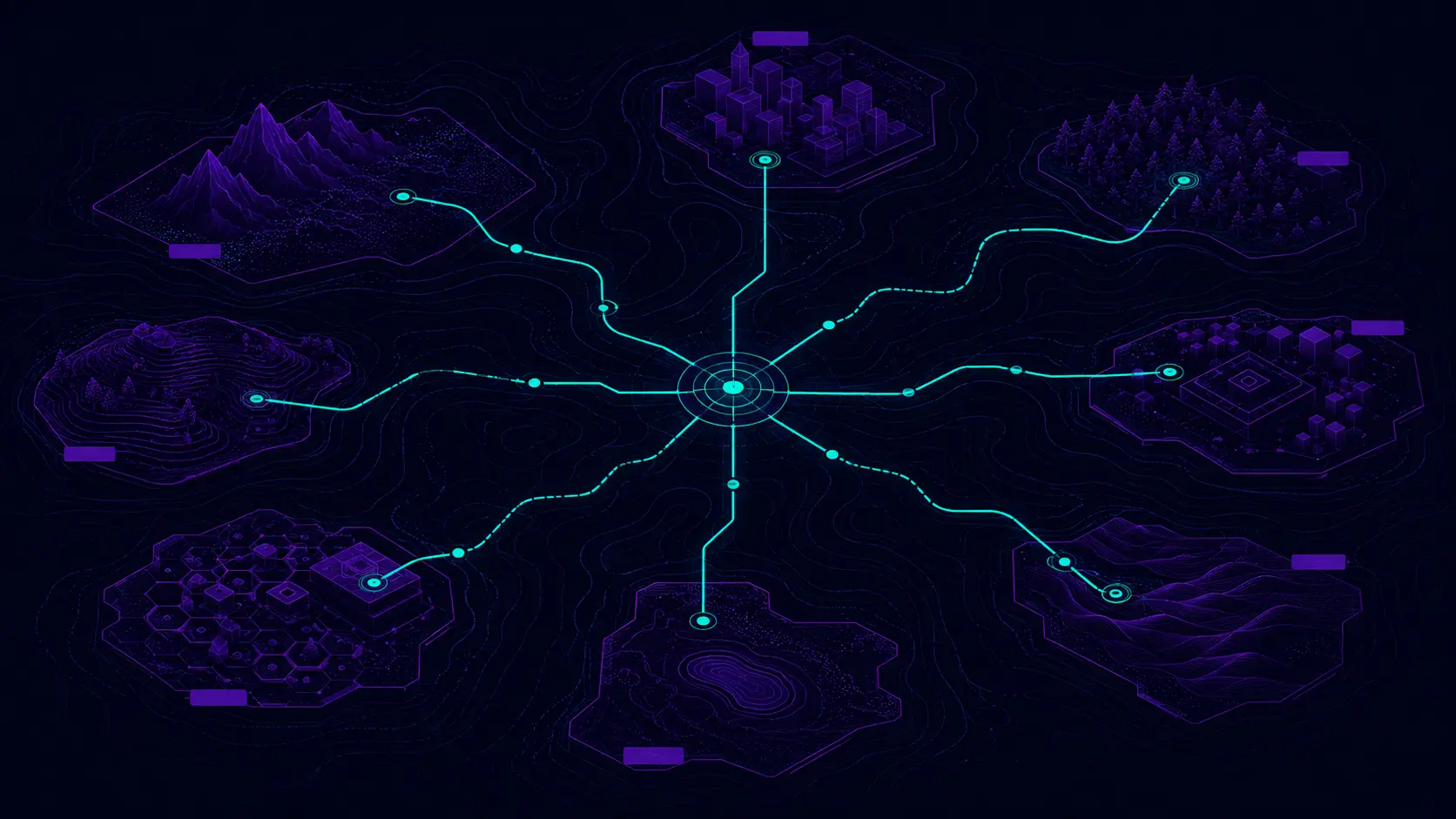

Five paths through BrokenCtrl

Path 01: I want documented cases of AI failure

Start with the Cases library. Every entry uses a structured template: what happened, what's verified, what failed, and what should have been in place.

Path 02: I need to evaluate an AI tool honestly

The Ethical AI Reviews section scores tools across six dimensions: transparency, privacy, safety, data governance, corporate conduct, and real-world harm evidence.

Path 03: I want to understand AI governance frameworks

The Frameworks section explains the mechanisms behind AI failures — incentive structures, control gaps, deployment mismatches, and what accountability actually requires.

Path 04: I need practical compliance tools

Download risk assessment templates, vendor due diligence checklists, incident report frameworks, and evidence grading rubrics — free, no fluff.

Path 05: I want to understand the methodology

The About page explains how claims are verified, what the confidence labels mean, the authorship policy, and the editorial independence statement.

Three-tier confidence labelling

Every factual claim in a case study or review carries one of three labels. The labels are part of the methodology, not a disclaimer.

Confirmed by primary sources — official statements, regulatory filings, peer-reviewed research, or direct documentation.

Supported by credible reporting from multiple outlets but not yet confirmed by primary documentation.

Reported but contested, based on a single source, or not yet confirmed. Included for completeness; treated as unconfirmed.
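For readers who prefer to see the rule written out, the three tiers can be sketched as a small decision function. This is an illustrative sketch only, not BrokenCtrl's actual tooling: the tier names (`TIER_1` to `TIER_3`), the `Evidence` fields, and the order in which the checks run are all assumptions made for the example.

```python
from dataclasses import dataclass
from enum import Enum


class Confidence(Enum):
    # Placeholder names (assumption): the tiers are described here, not named.
    TIER_1 = "confirmed by primary sources"
    TIER_2 = "supported by credible reporting from multiple outlets"
    TIER_3 = "contested, single-source, or not yet confirmed"


@dataclass
class Evidence:
    # Hypothetical fields chosen for illustration.
    primary_sources: int = 0   # official statements, filings, peer-reviewed research, direct documentation
    credible_outlets: int = 0  # independent outlets carrying credible reporting
    contested: bool = False    # the claim is actively disputed


def label_claim(evidence: Evidence) -> Confidence:
    """Assign a tier. The precedence of the checks is an assumption."""
    if evidence.primary_sources > 0:
        return Confidence.TIER_1
    if evidence.contested:
        return Confidence.TIER_3
    if evidence.credible_outlets >= 2:
        return Confidence.TIER_2
    # Single source, or nothing confirmed yet.
    return Confidence.TIER_3


if __name__ == "__main__":
    print(label_claim(Evidence(credible_outlets=3)).name)
```

In this sketch a claim backed only by one outlet, or one that is disputed and lacks primary documentation, falls to the lowest tier, matching the "treated as unconfirmed" wording above.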

QUESTIONS

What is an AI ethics guide?

An AI ethics guide helps you understand what ethical AI means in practice — not theory, but the documented reality of how AI companies behave, what governance frameworks require, and where the gaps are. BrokenCtrl is built as a practical AI ethics guide: case studies, ethical tool reviews, governance frameworks, and templates — all sourced, rated, and updated when evidence changes.

Is BrokenCtrl affiliated with any AI company?

No. BrokenCtrl is independently operated. Some tool reviews contain affiliate links — these are labelled clearly and do not affect editorial ratings or confidence labels. The full independence statement is on the About page.

How often is content updated?

Case studies and reviews carry a "Last updated" timestamp. When new evidence changes a confidence rating or ethics score, the page is revised and the change is noted. AI governance is not static — neither is this site.

Who is behind BrokenCtrl?

BrokenCtrl is run by a player risk and compliance professional with direct experience in regulated-industry risk management. The analytical approach (harm identification, risk tiering, user protection, compliance documentation) comes from that background, applied to AI governance. Full background is on the About page.