What is AI governance — frameworks explained

The mechanisms behind AI failures — and what accountability actually requires.

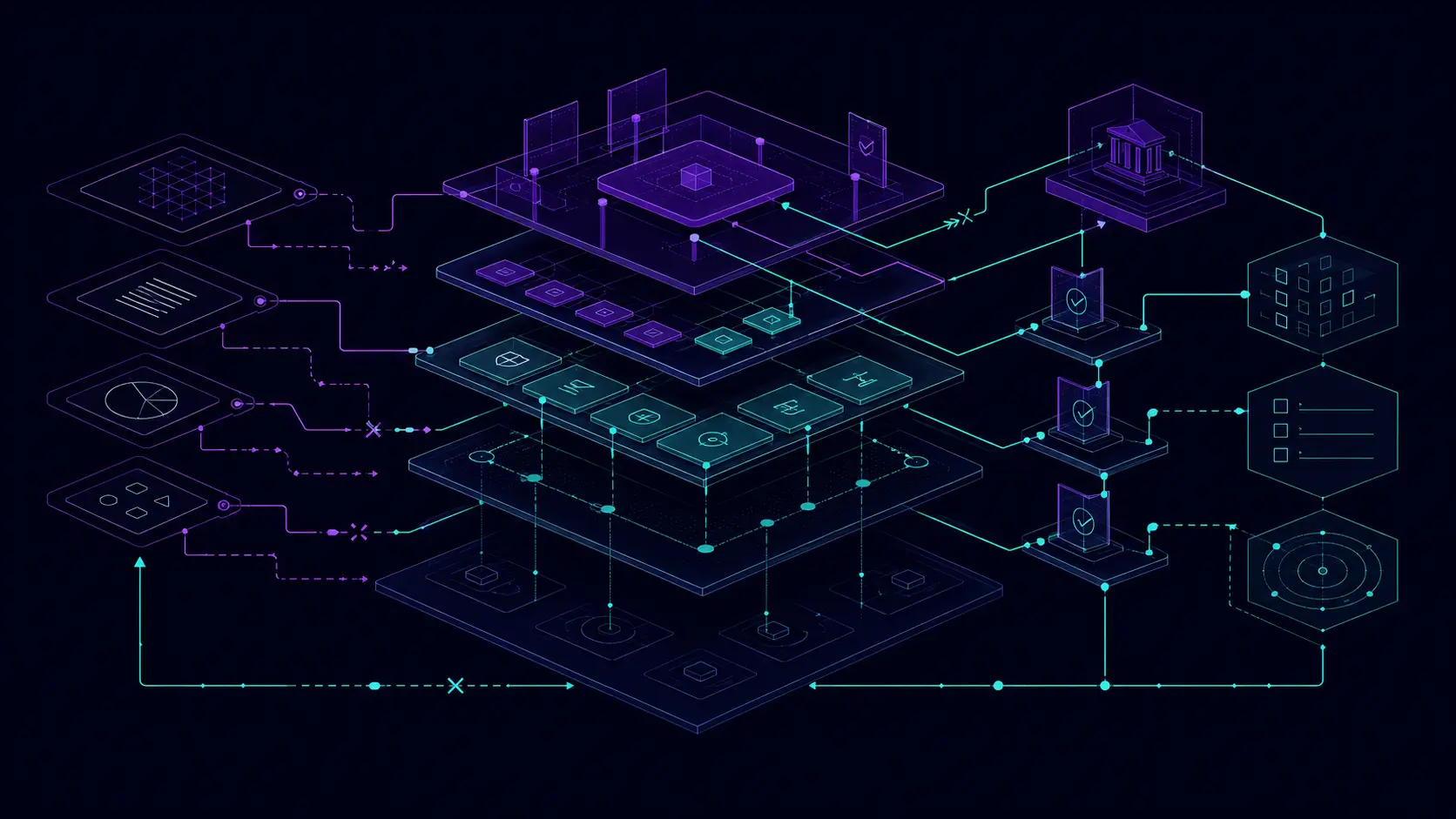

The Frameworks section explains the structural mechanisms behind AI failures — not individual incidents, but the patterns that make incidents predictable. What is AI governance? In practice it is the set of structures, incentives, and constraints that determine whether an AI company's stated commitments translate into actual behaviour. Most frameworks covered here are about why that translation fails.

Established AI governance frameworks (NIST's AI Risk Management Framework, the EU AI Act, ISO/IEC 42001, and AI governance maturity models) offer structural approaches. BrokenCtrl examines where they fall short in practice, and what these frameworks look like once enforcement is tested.

Framework posts are evergreen analysis. They do not report on specific events — they explain the mechanisms that events illustrate. Think of them as the analytical vocabulary for reading the Cases library.

Want to see these frameworks applied to specific tools? Ethical AI Reviews scores AI tools across six governance dimensions using the same analytical approach.

The Broken Control Loop

When incentives and power dynamics outmuscle internal safeguards, the control loop shifts outside the developer. How commercial pressure systematically overrides safety posture.

Distribution is the harm multiplier

Small safety gaps become mass harm when paired with viral distribution. Why platform integration changes the risk profile of any AI capability.

Friction as a safety feature

Rate limits, confirmation steps, and default settings are not UX decisions — they are safety controls. What happens when they are removed in the name of growth.
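
To make the point concrete, here is a minimal Python sketch of friction treated as a safety control. It is an illustration under stated assumptions, not a description of any real product: the `FrictionGate` class, its limits, and its return values are all hypothetical. It combines a sliding-window rate limit with a confirmation step that defaults to refusal for sensitive actions.

```python
from collections import deque
from dataclasses import dataclass, field


@dataclass
class FrictionGate:
    """Hypothetical gate: sliding-window rate limit plus a confirmation step."""

    max_requests: int = 3          # assumed limit per window (illustrative value)
    window_seconds: float = 60.0   # assumed window length (illustrative value)
    _timestamps: deque = field(default_factory=deque)

    def allow(self, now: float, sensitive: bool = False, confirmed: bool = False) -> str:
        # Forget requests that have aged out of the window.
        while self._timestamps and now - self._timestamps[0] >= self.window_seconds:
            self._timestamps.popleft()
        # Safe default: a sensitive action is refused unless explicitly confirmed.
        if sensitive and not confirmed:
            return "needs_confirmation"
        # Rate limit: refuse once the window is full.
        if len(self._timestamps) >= self.max_requests:
            return "rate_limited"
        self._timestamps.append(now)
        return "allowed"


gate = FrictionGate(max_requests=2, window_seconds=10.0)
first = gate.allow(now=0.0)
second = gate.allow(now=1.0)
third = gate.allow(now=2.0)                      # window already holds two requests
unconfirmed = gate.allow(now=3.0, sensitive=True)
after_window = gate.allow(now=10.0)              # the request at t=0 has expired
```

Removing friction "in the name of growth" corresponds, in this sketch, to raising `max_requests` without limit or flipping the confirmation default. Both are one-line changes, which is why they deserve the same review as any other safety control.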

Policy vs enforcement

Most AI ethics commitments are policy documents. Policy without enforcement mechanisms and consequences is preference, not constraint. How to tell the difference.

Foreseeable misuse as negligence

Once a harmful use case is predictable, "we didn't expect it" is not a defence. What pre-deployment hazard analysis actually requires.

The agentic safety gap

A model that behaves safely in evaluation may not behave safely when given tools, memory, and the ability to take real-world actions. Why agentic deployment is a different risk category.

QUESTIONS

What is AI governance?

AI governance is the set of rules, processes, and institutional structures that determine how AI systems are developed, deployed, and held accountable. It includes internal company policies, external regulations like the EU AI Act, and the technical controls that enforce both. BrokenCtrl focuses on the gap between stated AI governance commitments and observable conduct — where governance exists on paper but not in practice.

What is an AI governance framework?

An AI governance framework is a structured approach to managing AI risk and accountability within an organisation. Examples include the NIST AI Risk Management Framework, the EU AI Act's risk-tiering system, and internal frameworks developed by AI companies. BrokenCtrl covers both established frameworks and the analytical models needed to assess whether any framework is functioning as intended.

What are some examples of AI governance frameworks?

Major governance framework examples include the NIST AI Risk Management Framework (a voluntary US framework), the EU AI Act (binding regulation tiered by AI risk class), ISO/IEC 42001 (the international AI management system standard), and AI governance maturity models that score organisations on how well policy is implemented rather than on whether it merely exists. BrokenCtrl uses these as reference points: not as evidence that governance is solved, but as a yardstick for measuring where AI companies fall short of their own stated frameworks in practice.

What is an AI governance maturity model?

An AI governance maturity model scores an organisation's governance practices on a developmental scale — typically from ad-hoc through defined, managed, and optimised — across categories like risk management, accountability, and oversight. Maturity models differ from compliance frameworks: they measure how well governance is implemented, not just whether it exists on paper. BrokenCtrl's case library demonstrates the gap between high stated maturity and observable conduct.
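
The gap described above can be sketched in a few lines of Python. This is a hedged illustration only: the numeric values attached to each level, the `maturity_gap` and `largest_gap` helpers, and the example scores are assumptions made for this sketch, not part of any published maturity model.

```python
from enum import IntEnum


class Maturity(IntEnum):
    # Assumed 1-4 numbering for the scale named in the text.
    AD_HOC = 1
    DEFINED = 2
    MANAGED = 3
    OPTIMISED = 4


def maturity_gap(stated: dict, observed: dict) -> dict:
    """Per-category gap: stated level minus observed level (hypothetical helper)."""
    return {category: int(stated[category]) - int(observed[category]) for category in stated}


def largest_gap(gaps: dict) -> tuple:
    """Return the (category, gap) pair with the widest gap."""
    return max(gaps.items(), key=lambda item: item[1])


# Invented example scores for a fictional organisation.
stated = {
    "risk management": Maturity.MANAGED,
    "accountability": Maturity.OPTIMISED,
    "oversight": Maturity.DEFINED,
}
observed = {
    "risk management": Maturity.DEFINED,
    "accountability": Maturity.AD_HOC,
    "oversight": Maturity.DEFINED,
}

gaps = maturity_gap(stated, observed)
worst = largest_gap(gaps)
```

A positive gap means the organisation claims a higher level than its conduct supports. Scoring the two columns separately is the design choice that matters here: a single blended score would hide exactly the difference the model is meant to expose.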

How is AI governance different from AI ethics?

AI ethics describes principles and values — what AI systems should and should not do. AI governance describes the mechanisms that enforce those principles. Ethics without governance is a wish list. BrokenCtrl is primarily concerned with governance — specifically with whether enforcement mechanisms exist, whether they are technical or merely policy-based, and whether they produce consequences when violated.

Can I use these frameworks for compliance work?

The frameworks here are analytical tools for understanding AI governance failures — not compliance checklists. For practical compliance templates, visit the Templates section, which includes risk assessment frameworks, vendor due diligence checklists, and incident response structures based on the same analytical approach.